We have had to make some changes to where we host various OLab services.

Main Sites:

Moodle for OLab – courses, examples – most students and teachers who are using OLab in a course or workshop will come here.

Support, blog, how-to pages, contact info – our main WordPress page with general information relating to OLab.

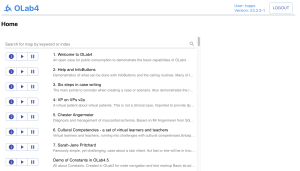

OLab Player – use ‘guest’ as both username and password if you don’t have a login – this is the link to our main OLab server, for when you want to play cases directly rather than via Moodle.

OLab Designer – login credentials required – for authors, teachers, course designers. You must have a specific login.

Developer services:

Dev server – player – this is our test and development box. Don’t put production cases or course materials on it. There is no guarantee of stability, uptime or backups.

Dev server – designer – this is for authoring cases on our test box.

CURIOS video mashup service – this is for integrating YouTube videos into your course materials.

Help files – general and specific help for OLab.

Other services:

We still have our other servers running on the University of Calgary virtual machines. It has recently become difficult to keep these stable, hence the move of some of these services. We will update you if this improves.

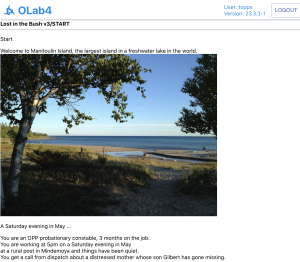

demo.openlabyrinth.ca – OpenLabyrinth v3.5.1 – pretty slow these days but still mostly works.

https://demo.olab.ca/ – currently offline

https://dev.olab.ca/ – currently offline